Published: February 6, 2019, Last updated: January 5, 2026

One of the core decisions web developers must make is where to implement logic and rendering in their application. This can be difficult because there are so many ways to build a website.

Our understanding of this space is informed by our work in Chrome talking to large sites over the past few years. Broadly speaking, we encourage developers to consider server-side rendering or static rendering over a full rehydration approach.

To better understand the architectures we're choosing from when we make this decision, we need consistent terminology and a shared framework for each approach. You can then better evaluate the tradeoffs of each rendering approach from the perspective of page performance.

Terminology

First, we define some terminology we will use.

Rendering

- Server-side rendering (SSR)

- Rendering an app on the server to send HTML, rather than JavaScript, to the client.

- Client-side rendering (CSR)

- Rendering an app in a browser, using JavaScript to modify the DOM.

- Prerendering

- Running a client-side application at build time to capture its initial state as static HTML. Note that "prerendering" in this sense is different from browser prerendering of future navigations.

- Hydration

- Running client-side scripts to add application state and interactivity to server-rendered HTML. Hydration assumes the DOM doesn't change.

- Rehydration

- While often used to mean the same thing as hydration, rehydration implies regularly updating the DOM with the latest state, including after the initial hydration.

Performance

- Time to First Byte (TTFB)

- The time between clicking a link and the first byte of content loading on the new page.

- First Contentful Paint (FCP)

- The time when requested content (article body, etc.) becomes visible.

- Interaction to Next Paint (INP)

- A representative metric that assesses whether a page consistently responds quickly to user input.

- Total Blocking Time (TBT)

- A proxy metric for INP that calculates how long the main thread was blocked during page load.
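To make the TBT definition concrete, here is a minimal sketch of the calculation as Lighthouse describes it: each main-thread task longer than 50 ms contributes only its excess over 50 ms. The function name and the input shape (a plain list of task durations already limited to the page-load window) are assumptions made for illustration.

```typescript
// Tasks longer than this are "long tasks"; only the excess counts as blocking.
const LONG_TASK_THRESHOLD_MS = 50;

// Hypothetical helper: sums the blocking portion of each main-thread task.
// Assumes the caller passes only tasks that ran during page load.
function totalBlockingTime(taskDurationsMs: number[]): number {
  return taskDurationsMs.reduce(
    (total, duration) =>
      total + Math.max(0, duration - LONG_TASK_THRESHOLD_MS),
    0,
  );
}
```

With tasks of 30, 120, 50, and 250 ms, only the second and fourth tasks exceed the threshold, contributing 70 ms and 200 ms.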

Server-side rendering

Server-side rendering generates the full HTML for a page on the server in response to navigation. This avoids additional round trips for data fetching and templating on the client, because the renderer handles them before the browser gets a response.
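As a rough illustration of what "generating the full HTML on the server" means, the sketch below turns data into a complete document string before anything is sent to the browser. The `Article` shape and the function names are hypothetical, and a real server would fetch the data and write the result to the HTTP response.

```typescript
// Hypothetical data shape for the page being rendered.
interface Article {
  title: string;
  body: string;
}

// Escape user-supplied text so it cannot inject markup into the page.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#39;");
}

// Templating happens here, on the server, so the browser receives
// ready-to-display HTML and needs no JavaScript to show the content.
function renderArticlePage(article: Article): string {
  return [
    "<!doctype html>",
    "<html>",
    `<head><title>${escapeHtml(article.title)}</title></head>`,
    "<body>",
    `<h1>${escapeHtml(article.title)}</h1>`,
    `<p>${escapeHtml(article.body)}</p>`,
    "</body>",
    "</html>",
  ].join("\n");
}
```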

Server-side rendering generally produces a fast FCP. Running page logic and rendering on the server lets you avoid sending lots of JavaScript to the client. This helps reduce a page's TBT, which can also lead to a lower INP, because the main thread isn't blocked as often during page load. When the main thread is blocked less often, user interactions have more opportunities to run sooner.

This makes sense, because with server-side rendering you're really just sending text and links to the user's browser. This approach can work well for a wide range of device and network conditions, and it opens up interesting browser optimizations, like streaming document parsing.

With server-side rendering, users are less likely to be left waiting for CPU-bound JavaScript to run before they can use your site. Even when you can't avoid third-party JavaScript, using server-side rendering to reduce your own first-party JavaScript costs can give you more budget for the rest. However, this approach has one potential tradeoff: generating pages on the server takes time, which can increase your page's TTFB.

Whether server-side rendering is enough for your application largely depends on what type of experience you're building. There's a long-standing debate over the correct applications of server-side rendering versus client-side rendering, but you can always choose to use server-side rendering for some pages and not others. Some sites have adopted hybrid rendering techniques with success. For example, Netflix server-renders its relatively static landing pages, while prefetching the JavaScript for interaction-heavy pages, giving these heavier client-rendered pages a better chance of loading quickly.

With many modern frameworks, libraries, and architectures, you can render the same application on both the client and the server. You can use these techniques for server-side rendering. However, architectures where rendering happens both on the server and on the client are their own class of solution with very different performance characteristics and tradeoffs. React users can use server DOM APIs or solutions built on them, like Next.js, for server-side rendering. Vue users can use Vue's server-side rendering guide or Nuxt. Angular has Universal.

Most popular solutions use some form of hydration, though, so be aware of the approach your tool uses.

Static rendering

Static rendering happens at build time. This approach offers a fast FCP, and also a lower TBT and INP, as long as you limit the amount of client-side JavaScript on your pages. Unlike server-side rendering, it also achieves a consistently fast TTFB, because the HTML for a page doesn't have to be dynamically generated on the server. Generally, static rendering means producing a separate HTML file for each URL ahead of time. With HTML responses generated in advance, you can deploy static renders to multiple CDNs to take advantage of edge caching.
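The "one HTML file per URL, produced ahead of time" idea can be sketched as a build step that maps every known URL to an output file. Everything here is illustrative: the `Page` shape, the function names, and the `index.html` naming convention are assumptions, and `content` is assumed to be trusted HTML. A real generator would write the files to disk rather than return them.

```typescript
// Hypothetical description of one page known at build time.
interface Page {
  url: string; // for example "/" or "/about/"
  title: string;
  content: string; // assumed to be trusted, already-rendered HTML
}

// Map a URL to the file a static host or CDN would serve for it.
function outputPathFor(url: string): string {
  const trimmed = url.replace(/^\/+|\/+$/g, "");
  return trimmed === "" ? "index.html" : `${trimmed}/index.html`;
}

// Render every page once, at build time, into a path-to-HTML map.
function buildStaticSite(pages: Page[]): Map<string, string> {
  const files = new Map<string, string>();
  for (const page of pages) {
    const html =
      `<!doctype html>\n<title>${page.title}</title>\n` +
      `<main>${page.content}</main>`;
    files.set(outputPathFor(page.url), html);
  }
  return files;
}
```

Because the full list of pages must be known when the build runs, this sketch also shows why sites with unpredictable or very numerous URLs are harder to render statically.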

Solutions for static rendering come in all shapes and sizes. Tools like Gatsby are designed to make developers feel like their application is being rendered dynamically, not generated as a build step. Static site generation tools such as 11ty, Jekyll, and Metalsmith embrace their static nature, providing a more template-driven approach.

One of the downsides of static rendering is that it must generate individual HTML files for every possible URL. This can be challenging or even infeasible when you need to predict those URLs ahead of time, and for sites with a large number of unique pages.

React users might be familiar with Gatsby, Next.js static export, or Navi, all of which make it convenient to create pages from components. However, static rendering and prerendering behave differently: statically rendered pages are interactive without needing to execute much client-side JavaScript, whereas prerendering improves the FCP of a Single Page Application that must be booted on the client to make pages truly interactive.

If you're unsure whether a given solution is static rendering or prerendering, try disabling JavaScript and loading the page you want to test. For statically rendered pages, most interactive features still exist without JavaScript. Prerendered pages might still have some basic features, like links, with JavaScript disabled, but most of the page is inert.

Another useful test is to use network throttling in Chrome DevTools and see how much JavaScript downloads before a page becomes interactive. Prerendering generally needs more JavaScript to become interactive, and that JavaScript tends to be more complex than the progressive enhancement approach used in static rendering.

Server-side rendering versus static rendering

Server-side rendering isn't the best solution for everything, because its dynamic nature can have significant compute overhead costs. Many server-side rendering solutions don't flush early, delay TTFB, or double the data being sent (for example, inlined state used by JavaScript on the client). In React, `renderToString()` can be slow because it's synchronous and single-threaded. Newer React server DOM APIs support streaming, which can get the initial part of an HTML response to the browser sooner while the rest of it is still being generated on the server.

Getting server-side rendering "right" can involve finding or building a solution for component caching, managing memory consumption, using memoization techniques, and other concerns. You're often processing or rebuilding the same app twice, once on the client and once on the server. Server-side rendering showing content sooner doesn't necessarily give you less work to do. If you have a lot of work on the client after a server-generated HTML response arrives, this can still lead to higher TBT and INP for your website.

Server-side rendering produces HTML on demand for each URL, but it can be slower than serving static rendered content. If you can put in the additional legwork, server-side rendering plus HTML caching can significantly reduce server render time. The upside of server-side rendering is the ability to pull more "live" data and respond to a more complete set of requests than is possible with static rendering. Pages that need personalization are a concrete example of the type of request that doesn't work well with static rendering.

Server-side rendering can also present interesting decisions when building a PWA: is it better to use full-page service worker caching, or to server-render individual pieces of content?

Client-side rendering

Client-side rendering means rendering pages directly in the browser with JavaScript. All logic, data fetching, templating, and routing are handled on the client instead of on the server. The effective outcome is that more data is passed from the server to the user's device, and that comes with its own set of tradeoffs.

Client-side rendering can be difficult to make and keep fast for mobile devices. With a little work to keep a tight JavaScript budget and deliver value in as few round trips as possible, you can get client-side rendering to almost replicate the performance of pure server-side rendering. You can get the parser working for you sooner by delivering critical scripts and data using `<link rel=preload>`. We also recommend considering patterns like PRPL to ensure that initial and subsequent navigations feel instant.
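As a small illustration of the preload hint, the sketch below builds the `<link rel="preload">` tags you might place in the document head for a critical script and its initial data. The helper name, the `PreloadHint` shape, and the example paths are hypothetical, and `href` values are assumed to be trusted. Data preloaded with `as="fetch"` generally needs the `crossorigin` attribute so that a later `fetch()` call can reuse it.

```typescript
// A subset of valid values for the "as" attribute.
type PreloadAs = "script" | "style" | "font" | "fetch" | "image";

// Hypothetical description of one resource to preload.
interface PreloadHint {
  href: string; // assumed to be a trusted URL
  as: PreloadAs;
  crossorigin?: boolean;
}

// Build one <link rel="preload"> tag per critical resource.
function preloadTags(hints: PreloadHint[]): string {
  return hints
    .map((hint) => {
      const crossorigin = hint.crossorigin ? " crossorigin" : "";
      return `<link rel="preload" href="${hint.href}" as="${hint.as}"${crossorigin}>`;
    })
    .join("\n");
}
```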

The primary downside of client-side rendering is that the amount of JavaScript required tends to grow as an application grows, which can impact a page's INP. This becomes especially difficult with the addition of new JavaScript libraries, polyfills, and third-party code, which compete for processing power and must often be processed before a page's content can render.

Experiences that use client-side rendering and rely on large JavaScript bundles should consider aggressive code-splitting to lower TBT and INP during page load, as well as lazy-loading JavaScript to serve only what the user needs, when it's needed. For experiences with little or no interactivity, server-side rendering can represent a more scalable solution to these issues.

For folks building single page applications, identifying core parts of the user interface shared by most pages lets you apply the application shell caching technique. Combined with service workers, this can dramatically improve perceived performance on repeat visits, because the page can load its application shell HTML and dependencies from CacheStorage very quickly.

Rehydration combines server-side and client-side rendering

Hydration is an approach that mitigates the tradeoffs between client-side and server-side rendering by doing both. Navigation requests, like full page loads or reloads, are handled by a server that renders the application to HTML. Then the JavaScript and data used for rendering are embedded into the resulting document. When done carefully, this achieves a fast FCP like server-side rendering, then "picks up" by rendering again on the client.

This is an effective solution, but it can have considerable performance drawbacks.

The primary downside of server-side rendering with rehydration is that it can have a significant negative impact on TBT and INP, even if it improves FCP. Server-side rendered pages can appear to be loaded and interactive, but they can't actually respond to input until the client-side scripts for components are executed and event handlers have been attached. This can take minutes on mobile, confusing and frustrating the user.

A rehydration problem: one app for the price of two

For the client-side JavaScript to accurately pick up where the server left off, without re-requesting all the data the server rendered its HTML with, most server-side rendering solutions serialize the response from a UI's data dependencies as script tags in the document. Because this duplicates a lot of HTML, rehydration can cause more problems than just delayed interactivity.

The server returns a description of the application's UI in response to a navigation request, but it also returns the source data used to compose that UI, and a complete copy of the UI's implementation, which then boots up on the client. The UI doesn't become interactive until after bundle.js has finished loading and executing.
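The duplication described above can be seen in a minimal sketch of how a server might embed state for rehydration. The function names and the `window.__INITIAL_STATE__` global are illustrative conventions, not the API of any particular framework; `bundle.js` stands in for the client-side copy of the UI implementation.

```typescript
// Serialize state for inlining in a <script> tag. Escaping "<" keeps a
// "</script>" inside the data from closing the tag early.
function serializeState(state: unknown): string {
  return JSON.stringify(state).replace(/</g, "\\u003c");
}

// The response carries the UI three times over: as rendered markup, as the
// data that produced it, and as the code (bundle.js) that can rebuild it.
function renderWithState(markup: string, state: unknown): string {
  return [
    `<div id="app">${markup}</div>`,
    `<script>window.__INITIAL_STATE__ = ${serializeState(state)}</script>`,
    `<script src="/bundle.js"></script>`,
  ].join("\n");
}
```

Calling `renderWithState("<h1>Hello</h1>", { title: "Hello" })` produces a document in which the text "Hello" appears once in the markup and again in the inlined state, which is the "one app for the price of two" cost in miniature.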

Performance metrics collected from real websites that use server-side rendering and rehydration indicate that it's rarely the best option. The most important reason is its effect on the user experience when a page looks ready but none of its interactive features work.

There's hope for server-side rendering with rehydration, though. In the short term, using server-side rendering only for highly cacheable content can reduce TTFB, producing results similar to prerendering. Rehydrating incrementally, progressively, or partially might be the key to making this technique more viable in the future.

Stream server-side rendering and rehydrate progressively

Server-side rendering has had a number of developments over the past few years.

Streaming server-side rendering lets you send HTML in chunks that the browser can progressively render as it's received. This can get markup to your users faster, speeding up your FCP. In React, streams being asynchronous in `renderToPipeableStream()`, compared to the synchronous `renderToString()`, means backpressure is handled well.
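The chunked delivery described above can be sketched without any framework. This simplified example uses a synchronous generator (real streaming renderers such as React's are asynchronous), and the function and parameter names are hypothetical. The point it illustrates is ordering: the first chunk is available before the expensive body has been rendered.

```typescript
// Yield the page in chunks so a server can flush each one as it is produced.
function* renderInChunks(
  title: string,
  renderBody: () => string, // stands in for slow, data-dependent rendering
): Generator<string> {
  // The document head is ready immediately, so it goes out first.
  yield `<!doctype html><html><head><title>${title}</title></head><body>`;
  // The body is rendered only when the consumer asks for the next chunk.
  yield `<main>${renderBody()}</main>`;
  yield "</body></html>";
}
```

A server would write each yielded chunk to the response as soon as it arrives, letting the browser start parsing the head, and fetching any resources it references, while the body is still being generated.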

Progressive rehydration is also worth considering (React has implemented it). With this approach, individual pieces of a server-rendered application are "booted up" over time, instead of the current common approach of initializing the entire application at once. This can help reduce the amount of JavaScript needed to make pages interactive, because it lets you defer client-side upgrading of low-priority parts of the page so they don't block the main thread, letting user interactions happen sooner after the user initiates them.

Progressive rehydration can also help you avoid one of the most common server-side rendering rehydration pitfalls: a server-rendered DOM tree gets destroyed and then immediately rebuilt, most often because the initial synchronous client-side render required data that wasn't quite ready, often a Promise that hasn't resolved yet.
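One way to picture "booting up" pieces over time is a priority queue of independently hydratable regions. This is a conceptual sketch only, not how React or any other framework implements progressive hydration; the `Island` shape and the function names are assumptions.

```typescript
// Hypothetical description of one independently hydratable region.
interface Island {
  id: string;
  priority: number; // lower number = hydrate sooner
  hydrate: () => void; // attaches handlers to the server-rendered DOM
}

function createHydrationQueue(islands: Island[]) {
  // Copy before sorting so the caller's array is left untouched.
  const pending = [...islands].sort((a, b) => a.priority - b.priority);

  // Remove the island at `index` from the queue and hydrate it.
  function run(index: number): void {
    const island = pending.splice(index, 1)[0];
    if (island) island.hydrate();
  }

  return {
    // Hydrate the most important remaining island; false means none are left.
    hydrateNext(): boolean {
      if (pending.length === 0) return false;
      run(0);
      return true;
    },
    // Hydrate one island right away, for example on first user interaction.
    hydrateNow(id: string): boolean {
      const index = pending.findIndex((island) => island.id === id);
      if (index === -1) return false; // unknown or already hydrated
      run(index);
      return true;
    },
  };
}
```

In a browser, `hydrateNext()` might be called from an idle callback so that each piece is upgraded only when the main thread is free, while `hydrateNow()` lets a region the user touches jump the queue.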

Partial rehydration

Partial rehydration has proven difficult to implement. This approach is an extension of progressive rehydration that analyzes individual pieces of the page (components, views, or trees) and identifies the pieces with little interactivity or no reactivity. For each of these mostly static parts, the corresponding JavaScript code is then transformed into inert references and decorative features, reducing their client-side footprint to nearly zero.

The partial rehydration approach comes with its own issues and compromises. It poses some interesting challenges for caching, and client-side navigation means we can't assume that server-rendered HTML for inert parts of the application is available without a full page load.

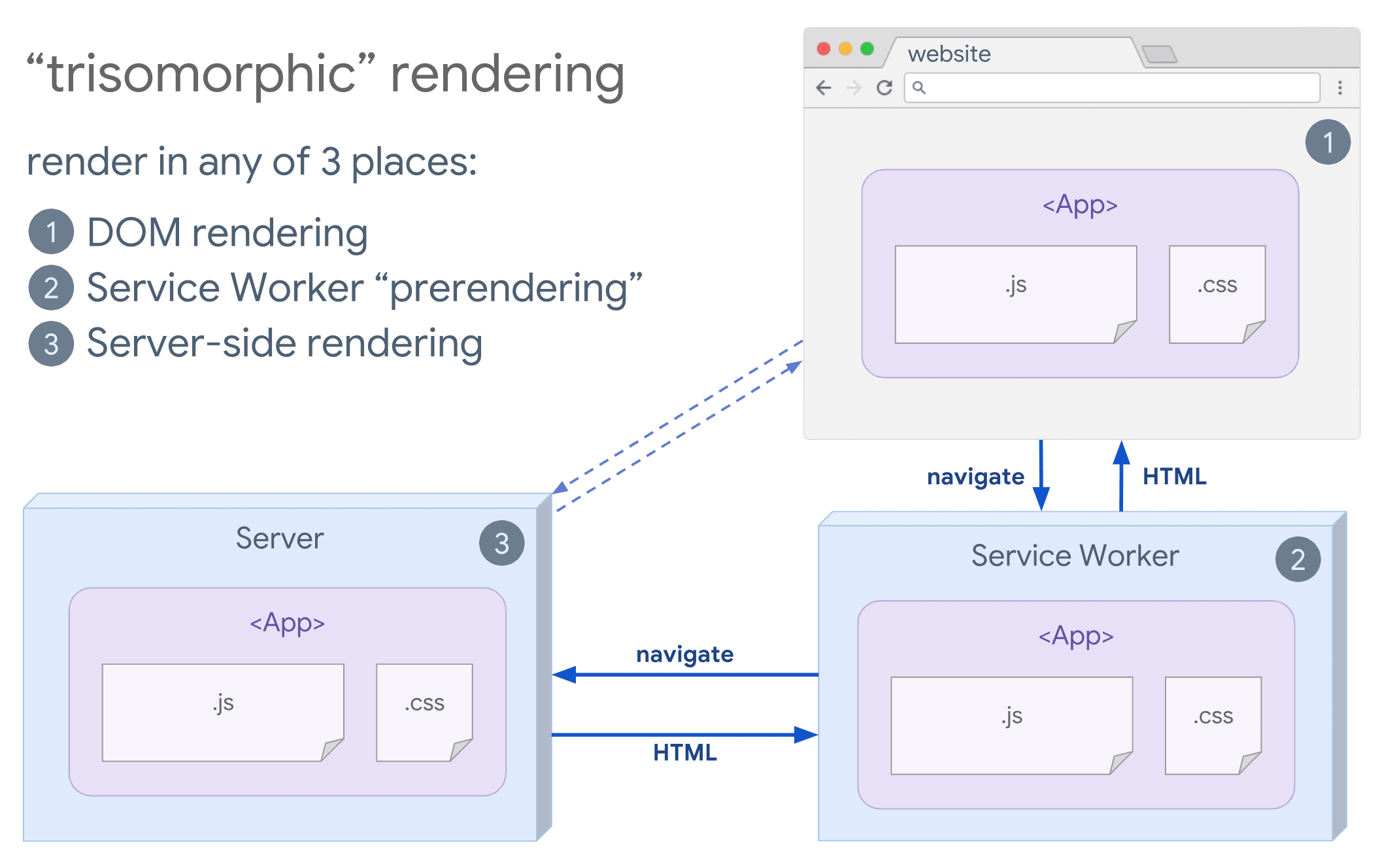

Trisomorphic rendering

If service workers are an option for you, consider trisomorphic rendering. This technique lets you use streaming server-side rendering for initial or non-JavaScript navigations, and then have your service worker take over rendering HTML for navigations after it has been installed. This can keep cached components and templates up to date and enable SPA-style navigations for rendering new views in the same session. This approach works best when you can share the same templating and routing code between the server, client page, and service worker.

SEO considerations

When choosing a web rendering strategy, teams often consider the impact on SEO. Server-side rendering is a popular choice for delivering a "complete looking" experience that crawlers can interpret. Crawlers can understand JavaScript, but there are often limitations to how they render. Client-side rendering can work, but it often needs additional testing and overhead. More recently, dynamic rendering has also become an option worth considering if your architecture depends heavily on client-side JavaScript.

Conclusion

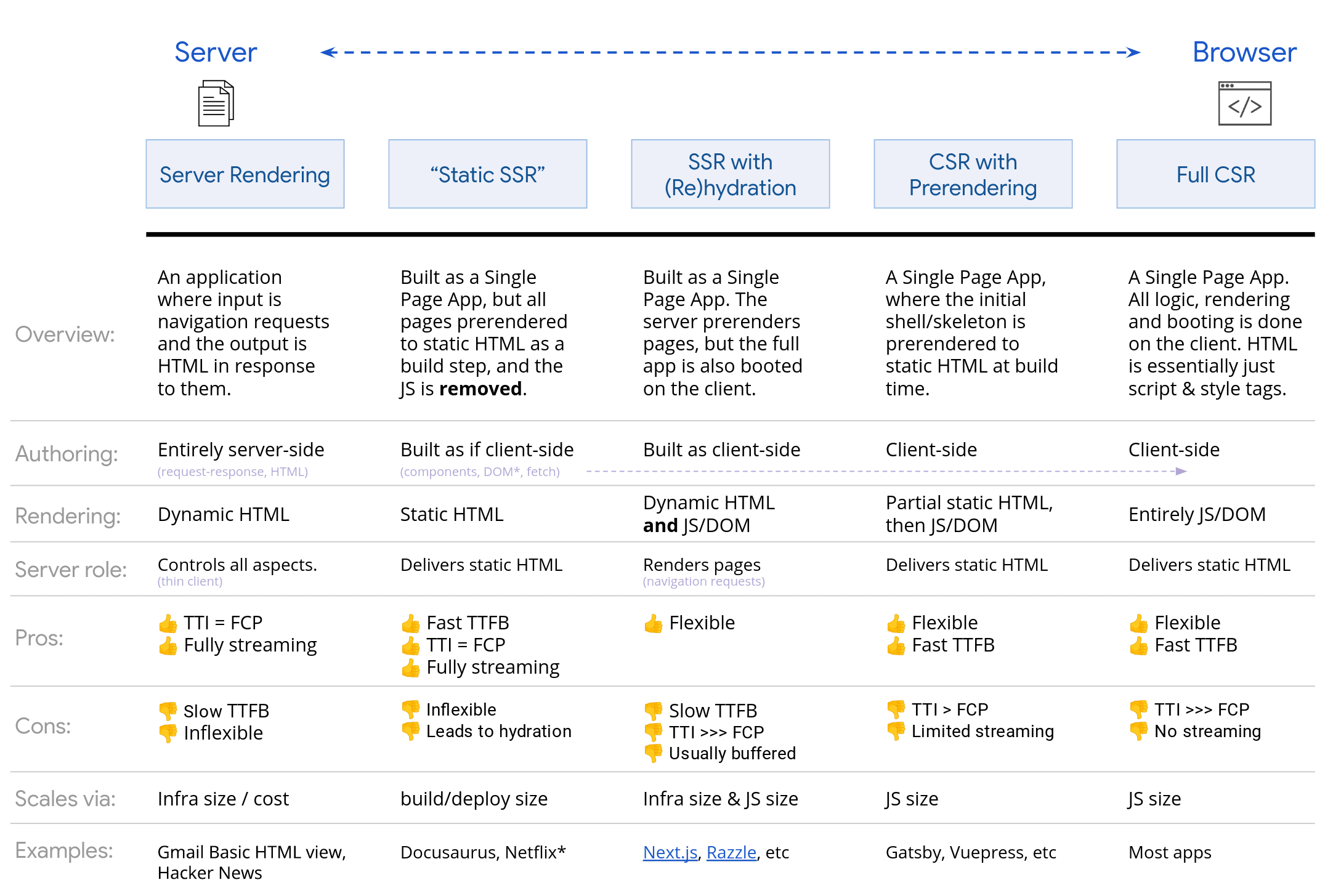

When deciding on an approach to rendering, measure and understand what your bottlenecks are. Consider whether static rendering or server-side rendering can get you most of the way there. It's fine to ship mostly HTML with minimal JavaScript to make an experience interactive. This handy infographic shows the server-client spectrum.

Credits

Thanks to everyone for their reviews and inspiration:

Jeffrey Posnick, Houssein Djirdeh, Shubhie Panicker, Chris Harrelson, and Sebastian Markbåge